Read-Later App with MCP: Save Articles, Feed Your LLM Context

I save 40 articles a week. My Claude reads them so I don't have to.

That's not a metaphor. Burn 451 runs a local Model Context Protocol server — burn-mcp-server on npm — that exposes your reading vault to Claude Desktop, Cursor, and any other MCP-compatible AI client. When you ask Claude a question that touches something in your saved articles, it pulls from your vault. Your reading queue stops being a list of things you meant to read and starts being a live knowledge base your AI works from.

This article covers what that integration actually looks like, how to set it up in under five minutes, and the three workflows where it changes how you work. If you want context on MCP itself first, the Anthropic MCP documentation is the authoritative reference.

Why "read-later" and "MCP context" belong in the same sentence

The failure mode of every read-later app is the same: the save rate outpaces the read rate. You save 10 articles on Monday. You open two by Wednesday. By Friday there are 8 more. The queue grows. You start skipping it. The app becomes a bookmark graveyard.

The conventional fix — better reminders, spaced repetition, email digests — treats the symptom. The underlying problem is that the value of an article is locked inside a linear reading experience. You have to read it to get the value. If you don't have time, you get nothing.

MCP breaks that constraint. Once your articles are accessible as LLM context, you don't need to read them to benefit from them. Save an article about a new vector search approach on Monday. Don't read it. On Thursday, ask Claude to help you design a search feature — and it surfaces that article from your vault, extracts the relevant parts, and folds them into its answer. You got value from the article without ever opening it.

This reframes what "read later" means. Not "save and forget." Save and feed your LLM context.

For a broader comparison of read-later apps that don't have this integration, the best read-later app 2026 guide covers ten tools head-to-head.

What burn-mcp-server actually is

burn-mcp-server is a stdio MCP server. It runs locally on your machine, communicates over stdin/stdout with the AI client that launches it, and exposes a handful of tools, chief among them search_vault, which queries your Burn 451 reading vault by keyword or semantic similarity.
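Because the transport is stdio, every JSON-RPC message travels as a single line of JSON on the server's stdin or stdout. The MCP spec requires newline-delimited messages with no embedded newlines. The TypeScript sketch below illustrates that framing. `encodeMessage` and `LineDecoder` are illustrative names, not part of burn-mcp-server or any MCP SDK, and the `query` argument name is assumed from the search_vault(query) form used in this article.

```typescript
// Minimal sketch of MCP stdio framing: one JSON-RPC message per line.
// encodeMessage and LineDecoder are illustrative helpers, not a real SDK API.

type JsonRpcMessage = {
  jsonrpc: "2.0";
  id?: number | string;
  method?: string;
  params?: unknown;
  result?: unknown;
  error?: { code: number; message: string };
};

// JSON.stringify (without indentation) never emits raw newlines; newlines
// inside strings are escaped, so appending "\n" yields exactly one frame.
function encodeMessage(msg: JsonRpcMessage): string {
  return JSON.stringify(msg) + "\n";
}

// Accumulates stdout chunks and returns complete messages. A chunk may end
// mid-message, so the trailing partial line is kept until more data arrives.
class LineDecoder {
  private buffer = "";

  push(chunk: string): JsonRpcMessage[] {
    this.buffer += chunk;
    const lines = this.buffer.split("\n");
    this.buffer = lines.pop() ?? ""; // last element is the incomplete remainder
    return lines
      .filter((line) => line.trim().length > 0)
      .map((line) => JSON.parse(line) as JsonRpcMessage);
  }
}

// "query" as the argument name is an assumption based on search_vault(query).
const request = encodeMessage({
  jsonrpc: "2.0",
  id: 1,
  method: "tools/call",
  params: { name: "search_vault", arguments: { query: "vector search" } },
});

const decoder = new LineDecoder();
// Simulate the message arriving split across two pipe reads.
const first = decoder.push(request.slice(0, 20));
const second = decoder.push(request.slice(20));
console.log(first.length, second.length); // 0 1
```

The decoder matters because a pipe read can end mid-message; holding the trailing fragment until the next chunk keeps a half-received line from being parsed as broken JSON.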

The server has approximately 396 weekly downloads on npm. It's open source. Nothing proxies through a third-party service — your queries go from the AI client to the local process to the Burn 451 API directly.

The three things it can do:

- search_vault(query): keyword and semantic search across everything you've permanently saved to your vault
- get_article_content: retrieve full article content and AI analysis for a saved article
- list_vault / list_sparks / list_flame: list bookmarks by stage (permanent vault / recently read / active inbox)
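On the client side, those tools fit in a small typed catalog. This sketch relies only on the tool names and stages listed above; the argument shapes are guesses (the `id` argument for get_article_content is entirely hypothetical), so treat the server's tools/list response as the authority on the real input schemas.

```typescript
// Sketch of a client-side catalog for the burn-mcp-server tools named above.
// Argument shapes are assumptions; the authoritative schemas come from the
// server's tools/list response.

type Stage = "vault" | "sparks" | "flame";

// Stage -> listing tool: vault = permanent vault, sparks = recently read,
// flame = active inbox.
const LIST_TOOL: Record<Stage, string> = {
  vault: "list_vault",
  sparks: "list_sparks",
  flame: "list_flame",
};

// Params of a JSON-RPC "tools/call" request, as laid out in the MCP spec.
interface ToolCallParams {
  name: string;
  arguments: Record<string, unknown>;
}

function searchVault(query: string): ToolCallParams {
  return { name: "search_vault", arguments: { query } };
}

// Hypothetical: assumes the article is addressed by an "id" argument.
function getArticleContent(id: string): ToolCallParams {
  return { name: "get_article_content", arguments: { id } };
}

function listStage(stage: Stage): ToolCallParams {
  return { name: LIST_TOOL[stage], arguments: {} };
}

console.log(JSON.stringify(listStage("sparks")));
// {"name":"list_sparks","arguments":{}}
```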

The technical pattern behind this is covered in depth in Building Burn MCP: 3 patterns for a stdio read-later server.

Setup: Claude Desktop in under 5 minutes

You need a free Burn 451 account and Claude Desktop (or another MCP-compatible client). No paid plan, no API key purchase.

Step 1: Install Claude Desktop (available from claude.ai/download) if you haven't already.

Step 2: Open the config file. On macOS: ~/Library/Application Support/Claude/claude_desktop_config.json. On Windows: %APPDATA%\Claude\claude_desktop_config.json.

Step 3: Add the burn entry.

```json
{
  "mcpServers": {
    "burn": {
      "command": "npx",
      "args": ["-y", "burn-mcp-server"],
      "env": {
        "BURN_API_TOKEN": "your_token_here"
      }
    }
  }
}
```

Get your API token from your Burn 451 settings page. Paste it in place of your_token_here.
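If your config file already lists other MCP servers, pasting the whole snippet over it will drop them. Here is a minimal sketch of a safer merge, assuming you have already parsed the file into an object. `addBurnServer` is a hypothetical helper, and file reading and writing (for example with Node's `fs` module) is left out to keep the example self-contained.

```typescript
// Sketch: merge the burn entry into an existing MCP client config object
// without touching other servers or top-level settings.

interface McpServerEntry {
  command: string;
  args: string[];
  env?: Record<string, string>;
}

interface McpConfig {
  mcpServers?: Record<string, McpServerEntry>;
  [key: string]: unknown; // other top-level settings are carried over as-is
}

// Returns a new object; the input config is not mutated.
function addBurnServer(config: McpConfig, token: string): McpConfig {
  return {
    ...config,
    mcpServers: {
      ...(config.mcpServers ?? {}),
      burn: {
        command: "npx",
        args: ["-y", "burn-mcp-server"],
        env: { BURN_API_TOKEN: token },
      },
    },
  };
}

// Example: a config that already has another (hypothetical) server.
const existing: McpConfig = {
  mcpServers: { notes: { command: "node", args: ["notes-server.js"] } },
};
const updated = addBurnServer(existing, "your_token_here");
console.log(JSON.stringify(Object.keys(updated.mcpServers ?? {}))); // ["notes","burn"]
```

Write the result back with `JSON.stringify(updated, null, 2)`. Since the Cursor entry format is identical, the same function works on a parsed .cursor/mcp.json.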

Step 4: Restart Claude Desktop. A hammer icon should appear in the toolbar. Click it, and you should see burn listed as an available MCP server with the tools listed above.

Total time: under five minutes. The server starts on demand when Claude needs it — nothing is running in the background when you're not using Claude.

For Cursor users: add the same config to .cursor/mcp.json in your project root. The entry format is identical.

"I install MCP servers to glue my reading flow into Claude — read-later finally feels useful"

That's a direct quote from a developer who set up the integration in January. The core observation is right: MCP is a glue protocol. It connects the tools you already use — your read-later app, your calendar, your code editor — into a single AI interface. Read-later has been siloed from the rest of the knowledge workflow for a decade. MCP ends that.

The pattern that makes this feel different in practice: you stop thinking "I should read that article before I start this project" and start thinking "I'll save relevant articles and let Claude pull them when needed." The saving behavior doesn't change. The reading requirement disappears. The vault becomes a background knowledge layer rather than a reading obligation.

For context on how Burn compares to apps that don't have this integration, Burn 451 vs Readwise Reader covers the full comparison — including where Readwise's in-app AI beats what MCP gives you.

3 workflows where MCP read-later changes how you work

1. Research-augmented writing

You're writing a piece on AI agent architectures. Over the past month you saved 12 articles on the topic — some you read, some you didn't. Instead of opening each one, you ask Claude: "Search my vault for articles about agent memory and tool use. Summarize the main patterns."

Claude calls search_vault("agent memory tool use"), retrieves 6 relevant articles with their AI summaries, and gives you a synthesis. You didn't read all 6. You didn't need to. Your past saving behavior did the research for your future self.
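Under the hood, that search comes back as an MCP tool result: a `content` array of typed blocks plus an optional `isError` flag, as defined in the MCP spec. What burn-mcp-server actually puts inside the text blocks isn't documented here, so the sample payload below is invented; the sketch only shows how a client gathers the text parts before the model synthesizes them. `textOf` is a hypothetical helper name.

```typescript
// Sketch: collect the text blocks from an MCP tools/call result.
// The result shape (content array + isError) follows the MCP spec;
// the sample payload is made up for illustration.

type TextBlock = { type: "text"; text: string };
type ContentBlock = TextBlock | { type: "image"; data: string; mimeType: string };

interface CallToolResult {
  content: ContentBlock[];
  isError?: boolean;
}

function textOf(result: CallToolResult): string {
  const text = result.content
    .filter((block): block is TextBlock => block.type === "text")
    .map((block) => block.text)
    .join("\n\n");
  // When isError is set, the text blocks carry the error message, not data.
  if (result.isError) {
    throw new Error(`tool error: ${text}`);
  }
  return text;
}

// Hypothetical response to search_vault("agent memory tool use").
const sample: CallToolResult = {
  content: [
    { type: "text", text: "Article A: summary of memory patterns" },
    { type: "text", text: "Article B: summary of tool-use loops" },
  ],
};

console.log(textOf(sample));
```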

2. Context injection for coding sessions

You're in Cursor working on a new feature. You saved a paper about the algorithm you're implementing but haven't read it carefully. Add a Cursor rule: "When I ask about [topic], first check my Burn vault for relevant context." Cursor calls the MCP server, pulls the paper's content and summary, and includes it in the coding session context.

This is the pattern covered in Building Burn MCP: 3 patterns — specifically the "vault-as-background-context" pattern where the server is called implicitly as part of system instructions.

3. Pre-meeting intelligence

Before a call with someone from a company or field you've been following, ask Claude: "Search my vault for anything I've saved about [company/topic] in the last 30 days." Claude pulls recent saves with summaries. You walk into the meeting having effectively reviewed your own curated reading on the topic — without opening any of the articles.
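Whether search_vault can filter by date on the server side isn't covered here, so the "last 30 days" part may have to happen after the search returns. This sketch assumes each hit carries a hypothetical ISO-8601 `savedAt` field; the `VaultHit` type, its field names, and the sample titles are illustrative, not burn-mcp-server's real output format.

```typescript
// Sketch: keep only saves from the last N days, newest first.
// VaultHit and its savedAt field are hypothetical.

interface VaultHit {
  title: string;
  savedAt: string; // ISO-8601 timestamp (assumed)
}

const DAY_MS = 24 * 60 * 60 * 1000;

function savedWithin(hits: VaultHit[], days: number, now: Date = new Date()): VaultHit[] {
  const cutoff = now.getTime() - days * DAY_MS;
  return hits
    .filter((hit) => Date.parse(hit.savedAt) >= cutoff)
    // Newest first, the order you'd want to skim before a call.
    .sort((a, b) => Date.parse(b.savedAt) - Date.parse(a.savedAt));
}

const hits: VaultHit[] = [
  { title: "Acme raises Series B", savedAt: "2026-03-02T09:00:00Z" },
  { title: "Acme's pricing teardown", savedAt: "2026-01-10T12:00:00Z" },
  { title: "Acme ships v2 API", savedAt: "2026-03-20T18:30:00Z" },
];

const recent = savedWithin(hits, 30, new Date("2026-03-25T00:00:00Z"));
console.log(JSON.stringify(recent.map((h) => h.title))); // ["Acme ships v2 API","Acme raises Series B"]
```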

For how the vault architecture enables this, see the best AI summarizer for articles guide, which covers how Burn generates the per-article summaries that make vault search useful.

How Burn 451 is different from other read-later apps with AI

Several read-later apps have AI features. None of them have an MCP server. The distinction matters because "AI features inside the app" and "your reading vault as an MCP tool" are architecturally different:

- In-app AI: you go to the app and use AI there. Value is contained in one product.
- MCP server: your reading vault is a live data source for any AI client. Value flows wherever you do AI work.

Readwise Reader has Ghostreader, daily digests, and in-app Q&A. It's excellent. But if you're in Cursor or Claude Desktop doing real work, Readwise can't inject your reading history there. Burn's MCP server can. The Burn vs Readwise comparison goes deep on this tradeoff.

The broader read-later app landscape — including apps that don't have MCP or AI at all — is in the best read-later app 2026 guide.

What MCP is and why it matters for read-later apps specifically

The Model Context Protocol is an open standard that defines how AI clients call external tools. It's to AI what HTTP is to web browsers — a common protocol that means any MCP client can work with any MCP server without custom integration work.

The protocol uses a server/client model where the server (like burn-mcp-server) runs as a local process and communicates via stdin/stdout. The AI client (Claude Desktop, Cursor) launches the server on demand and sends JSON-RPC messages to call tools. There's no web server, no port, no cloud middleware — it's a local process that starts when needed and exits when done.
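Concretely, an MCP session over that pipe opens the same way every time: an `initialize` request, a `notifications/initialized` notification, then tool discovery with `tools/list` and invocations with `tools/call`. The sketch below only builds those lines as a client would write them to the server's stdin. The client name and protocol version string are example values, search_vault's `query` argument is assumed, `openingSequence` is a hypothetical helper, and a real client waits for each response before sending the next request.

```typescript
// Sketch of the client side of an MCP stdio session, as newline-delimited
// JSON-RPC 2.0 lines. Requests carry an id; notifications do not.

interface RpcRequest { jsonrpc: "2.0"; id: number; method: string; params?: object }
interface RpcNotification { jsonrpc: "2.0"; method: string; params?: object }

function openingSequence(query: string): string[] {
  const messages: Array<RpcRequest | RpcNotification> = [
    {
      jsonrpc: "2.0",
      id: 1,
      method: "initialize",
      params: {
        protocolVersion: "2024-11-05", // example value; client and server negotiate
        capabilities: {},
        clientInfo: { name: "example-client", version: "0.1.0" },
      },
    },
    // Sent after the initialize response arrives; signals the client is ready.
    { jsonrpc: "2.0", method: "notifications/initialized" },
    // Discover available tools (names, descriptions, input schemas).
    { jsonrpc: "2.0", id: 2, method: "tools/list" },
    // Invoke one; the "query" argument name is assumed from search_vault(query).
    {
      jsonrpc: "2.0",
      id: 3,
      method: "tools/call",
      params: { name: "search_vault", arguments: { query } },
    },
  ];
  return messages.map((m) => JSON.stringify(m));
}

for (const line of openingSequence("mixture of experts")) {
  console.log(line);
}
```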

For read-later apps specifically, MCP solves a problem that RAG-over-bookmarks never quite did: it gives you structured tool calls, not just semantic search. You can ask "what are my 5 most recent saves about machine learning" and get a precise answer. You can ask "get the full content of the article about Mixture of Experts I saved last week" and get the full text. The structured query interface is more useful than embedding similarity alone.
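The "structured" part is concrete: each MCP tool advertises a JSON Schema for its arguments in the tools/list response, so the client knows which parameters a call accepts before making it. The definition below is a hypothetical rendering of search_vault (the `limit` parameter is invented for illustration), paired with a deliberately minimal argument check; `checkArgs` is a hypothetical helper, and a production client would use a full JSON Schema validator.

```typescript
// Sketch: a tool definition as it might appear in a tools/list response,
// plus a minimal argument check. The schema contents are hypothetical;
// only the name/description/inputSchema layout comes from the MCP spec.

interface ToolDefinition {
  name: string;
  description?: string;
  inputSchema: {
    type: "object";
    properties: Record<string, { type: string; description?: string }>;
    required?: string[];
  };
}

const searchVaultTool: ToolDefinition = {
  name: "search_vault",
  description: "Keyword and semantic search across the permanent vault",
  inputSchema: {
    type: "object",
    properties: {
      query: { type: "string" },
      limit: { type: "number", description: "hypothetical: max results" },
    },
    required: ["query"],
  },
};

// Only these JSON Schema types map cleanly onto JavaScript's typeof.
const PRIMITIVES = new Set(["string", "number", "boolean"]);

// Returns a list of problems; an empty list means the arguments pass the
// required-key, known-key, and primitive-type checks.
function checkArgs(tool: ToolDefinition, args: Record<string, unknown>): string[] {
  const { properties, required = [] } = tool.inputSchema;
  const problems: string[] = [];
  for (const key of required) {
    if (!(key in args)) problems.push(`missing required "${key}"`);
  }
  for (const [key, value] of Object.entries(args)) {
    const prop = properties[key];
    if (!prop) problems.push(`unknown argument "${key}"`);
    else if (PRIMITIVES.has(prop.type) && typeof value !== prop.type) {
      problems.push(`"${key}" should be ${prop.type}`);
    }
  }
  return problems;
}

console.log(JSON.stringify(checkArgs(searchVaultTool, { query: "machine learning", limit: 5 }))); // []
console.log(checkArgs(searchVaultTool, { limit: "5" }));
```

Checking arguments against the advertised schema is what turns a request like "my 5 most recent saves" into a precise tool call rather than a similarity guess, provided the server actually exposes parameters of that kind.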

The deep technical architecture of the Burn MCP server — transport layer, tool schema, auth — is in Building Burn MCP: 3 patterns for a stdio read-later server.

The vault: what gets exposed to MCP and what doesn't

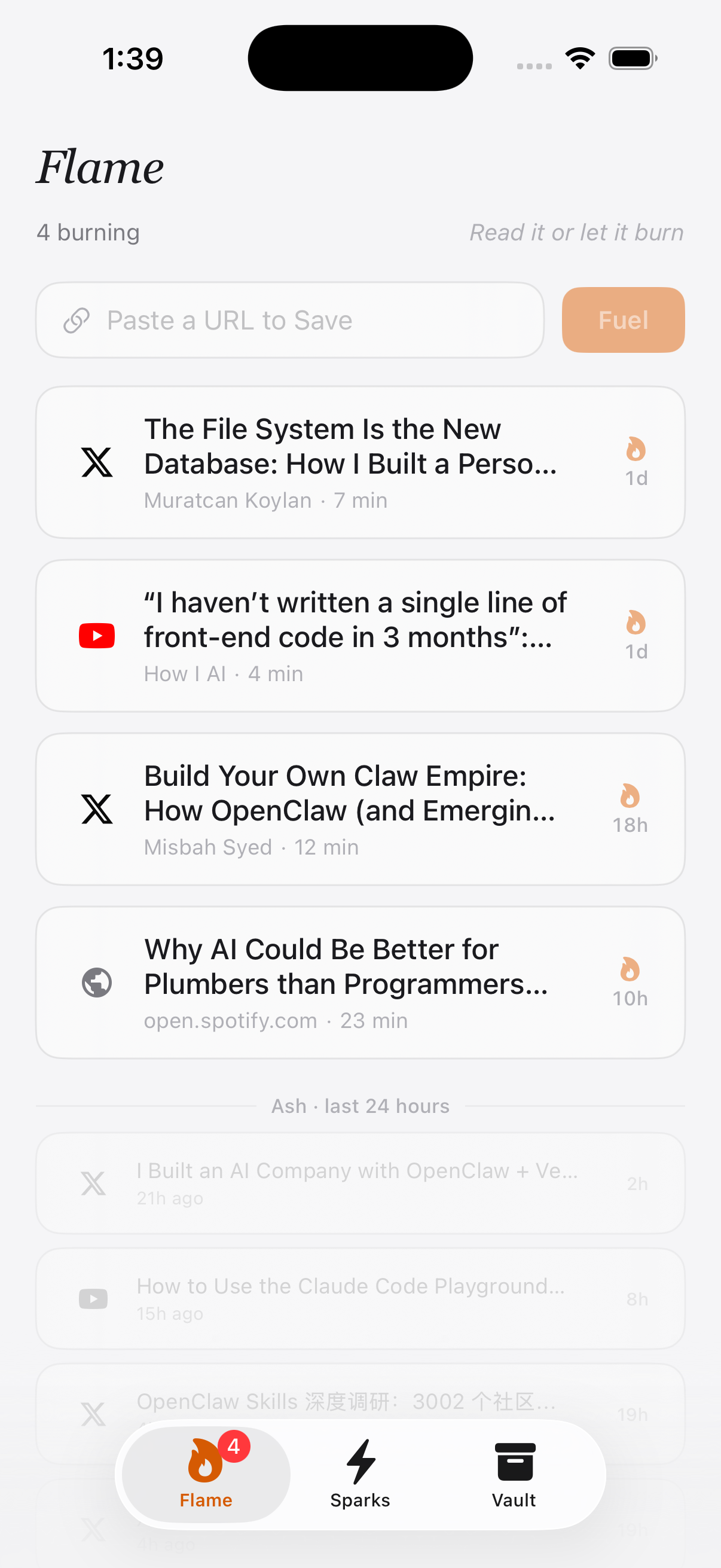

Burn 451 has two storage layers: the inbox and the vault. The inbox is the 24-hour reading queue; articles in it expire if you don't read them. The vault holds permanent saves: articles you finished and chose to keep.

The MCP server exposes the vault only, not the inbox. This is intentional: the inbox is transient by design, and exposing it to AI context would mean the AI has access to articles you haven't decided to keep yet. The vault is curated signal — everything in it is something you considered worth keeping after reading. That makes it much higher quality as a knowledge base than a raw save queue.

This also means the vault compounds over time. The more you save and read, the more context your AI has. After six months of using Burn, your Claude has access to hundreds of curated articles on the topics you care about. That is a very different knowledge base from the one it gets from training data alone.

On the iOS side, every article you finish in the Burn app goes to the vault automatically. The read-later app comparison covers the save-to-vault flow in detail.

Burn 451 as an MCP read-later app: what it is and what it isn't

Burn 451 is an iOS app with a native MCP server. It is:

- A read-later queue with a 24-hour deadline on saves (read it or lose it)
- A permanent vault for finished articles, with AI summaries on each
- A stdio MCP server that exposes your vault to Claude, Cursor, and other MCP clients
- Free, with no paid plan required for MCP access

It is not:

- An Android app (iOS only right now)
- A bulk archive tool: no import from Pocket or Instapaper
- A replacement for Readwise Reader's in-app annotation and spaced repetition features

If the MCP integration is the feature you care about and you're on iOS, this is the only read-later app that gives you that. If you're on Android or need bulk archive import, the best read-later app 2026 guide covers alternatives.

Frequently asked questions

What is a read-later app with MCP support?

A read-later app with MCP support exposes your saved articles as a Model Context Protocol server — meaning any MCP-compatible AI client (Claude Desktop, Cursor, Windsurf, etc.) can query your reading vault directly. Instead of manually copying article text into a chat window, the AI client pulls content from your saved library on demand. Burn 451 is currently the only read-later app with a native MCP server available on npm.

How do I connect Burn 451 to Claude Desktop via MCP?

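Add the burn-mcp-server entry to your Claude Desktop config file (~/Library/Application Support/Claude/claude_desktop_config.json on macOS, %APPDATA%\Claude\claude_desktop_config.json on Windows). The entry is: { "mcpServers": { "burn": { "command": "npx", "args": ["-y", "burn-mcp-server"], "env": { "BURN_API_TOKEN": "your_token_here" } } } }, with the API token from your Burn 451 settings page in place of your_token_here. Restart Claude Desktop after saving. Claude will then have access to the burn tools, including search_vault, which queries your Burn 451 reading vault.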

What is the Model Context Protocol (MCP)?

MCP is an open standard introduced by Anthropic in late 2024 that defines how AI clients (like Claude Desktop or Cursor) connect to external data sources and tools. Instead of copy-pasting content into chat, you run a local stdio server that the AI client calls at inference time. This means the AI has access to live, structured data — your bookmarks, your calendar, your code — without manual context injection. The spec is published at modelcontextprotocol.io.

Does burn-mcp-server work with Cursor and other MCP clients?

Yes. burn-mcp-server is a standard stdio MCP server that works with any client implementing the MCP spec: Claude Desktop, Cursor, Windsurf, Continue.dev, and others. The configuration syntax varies slightly by client, but the server entry point is the same: `npx -y burn-mcp-server`. Cursor users add it to .cursor/mcp.json in the project root.

Why connect a read-later app to an LLM at all?

Most people save 30-50 articles a week and read maybe 20% of them. The other 80% sits in a queue that becomes background guilt. MCP integration changes the value proposition: even articles you didn't finish reading can feed your LLM context. You save an article about a new architecture pattern, never get around to it, but two weeks later ask Claude to help you architect something — and it surfaces that article from your vault. The save becomes useful without requiring you to read it.

Is burn-mcp-server free and open source?

Yes. The burn-mcp-server npm package is free and open source. It has approximately 396 weekly downloads on npm. It communicates with the Burn 451 API using your account credentials, which means you need a free Burn 451 account. The server itself runs locally via npx — nothing is uploaded or proxied through a third-party service.

What can I do with my reading vault once it's connected via MCP?

Three workflows are most common: (1) Research assistant — ask Claude to find what you've saved on a specific topic before writing or coding. (2) Pre-meeting prep — query your vault for context on a person, company, or technology before a call. (3) Passive knowledge base — let Claude search your vault as background context when answering complex questions, so your reading history enriches its responses without you curating it manually.

How is this different from Readwise Reader's AI features?

Readwise Reader has AI features (Ghostreader, summaries, Q&A) but they're contained inside the Readwise app — you get AI on top of your reading, not AI that's accessible from your coding environment or external chat client. Burn 451's MCP server makes your vault a live data source for any MCP client, which means it works wherever your AI client works: inside Cursor while you're coding, inside Claude Desktop while you're writing, inside any MCP-compatible tool. The integration model is fundamentally different.

Related reading

- Best Read-Later App 2026: 10 tools tested
- MCP Read-Later Server: how the burn-mcp-server works under the hood
- Building Burn MCP: 3 patterns for a stdio read-later server
- Burn 451 vs Readwise Reader: which is better for AI workflows?
- Best AI summarizer for articles: how Burn generates vault summaries
- Pocket Alternative App 2026: 6 Mobile Read-Later Apps Compared

The only read-later app with a native MCP server. Free on iOS — connect your reading vault to Claude in under 5 minutes.